Documentation Index

Fetch the complete documentation index at: https://docs.adcontextprotocol.org/llms.txt

Use this file to discover all available pages before exploring further.

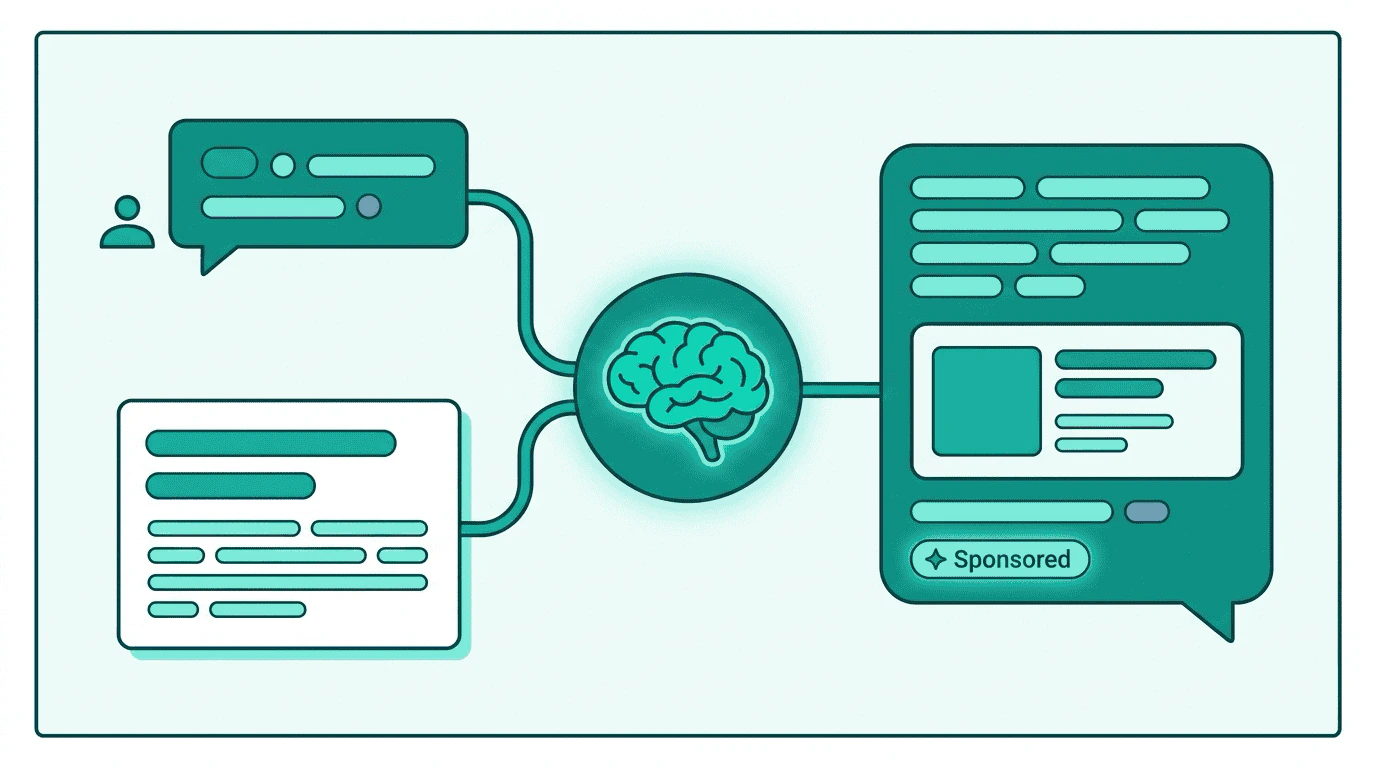

The core design principle

TMP solves this with a separation of concerns: the protocol decides what sponsored content is available and relevant, and the platform’s LLM decides how to present it. The buyer never touches the user experience. The platform never builds custom ad logic. This is what makes TMP a mediation protocol rather than an ad injection system. Multiple buyer agents submit offers through a standard interface, and the platform retains editorial control over how — and whether — recommendations appear.Step 1: The demand problem

Every ad surface before AI assistants works the same way: a user loads a page, a bid request broadcasts context to buyers, an ad server renders a creative in a defined slot. AI assistants break all three assumptions:- No defined slot — sponsored content is woven into the response text

- No bid request — the conversation is private and ephemeral, not a crawlable URL

- No ad server — the platform’s LLM generates the response

Step 2: Bringing demand to the context

- Property type:

ai_assistant - Placement:

chat-inline-recommendation— a conversational context where the LLM can incorporate sponsored recommendations

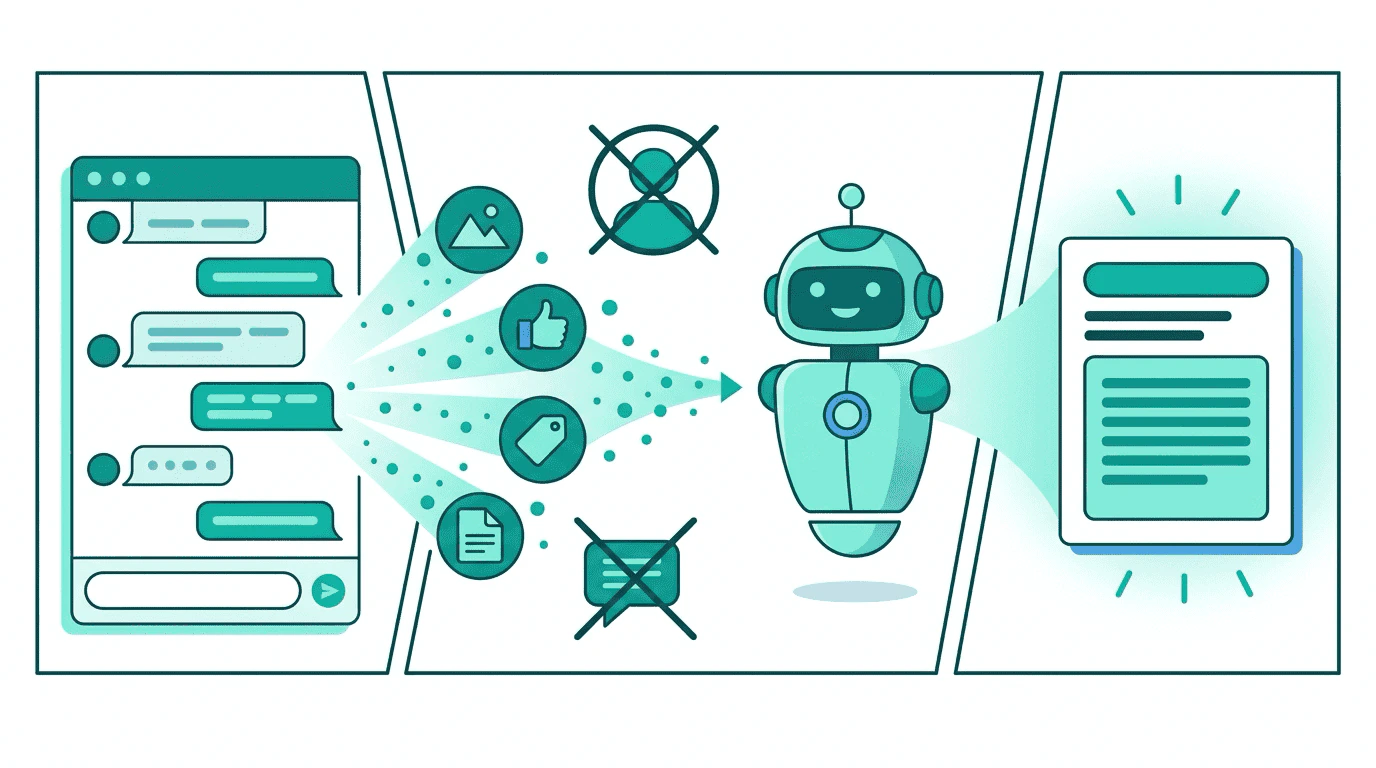

Step 3: Classified signals instead of bid requests

Context Match request for a conversation turn

Context Match request for a conversation turn

artifact_refs — conversation turns are ephemeral. The context_signals carry the classified output. The summary field is especially useful for LLM-native buyers that evaluate relevance semantically. A platform that operates in a trusted execution environment could alternatively send the full conversation as an artifact — the publisher controls the disclosure level.| Integration level | What the offer contains | How the platform uses it |

|---|---|---|

| Activation only | package_id | Platform’s ad server activates a line item (for platforms with traditional ad serving alongside AI) |

| Brand mention | package_id + brand + summary | LLM uses summary to generate a natural brand mention |

| Full recommendation | package_id + brand + summary + creative_manifest | LLM weaves product details from the manifest into the response |

| Catalog steering | package_id + brand + creative_manifest with catalog items | LLM recommends specific products from a synced catalog |

Context Match response with inline creative manifest

Context Match response with inline creative manifest

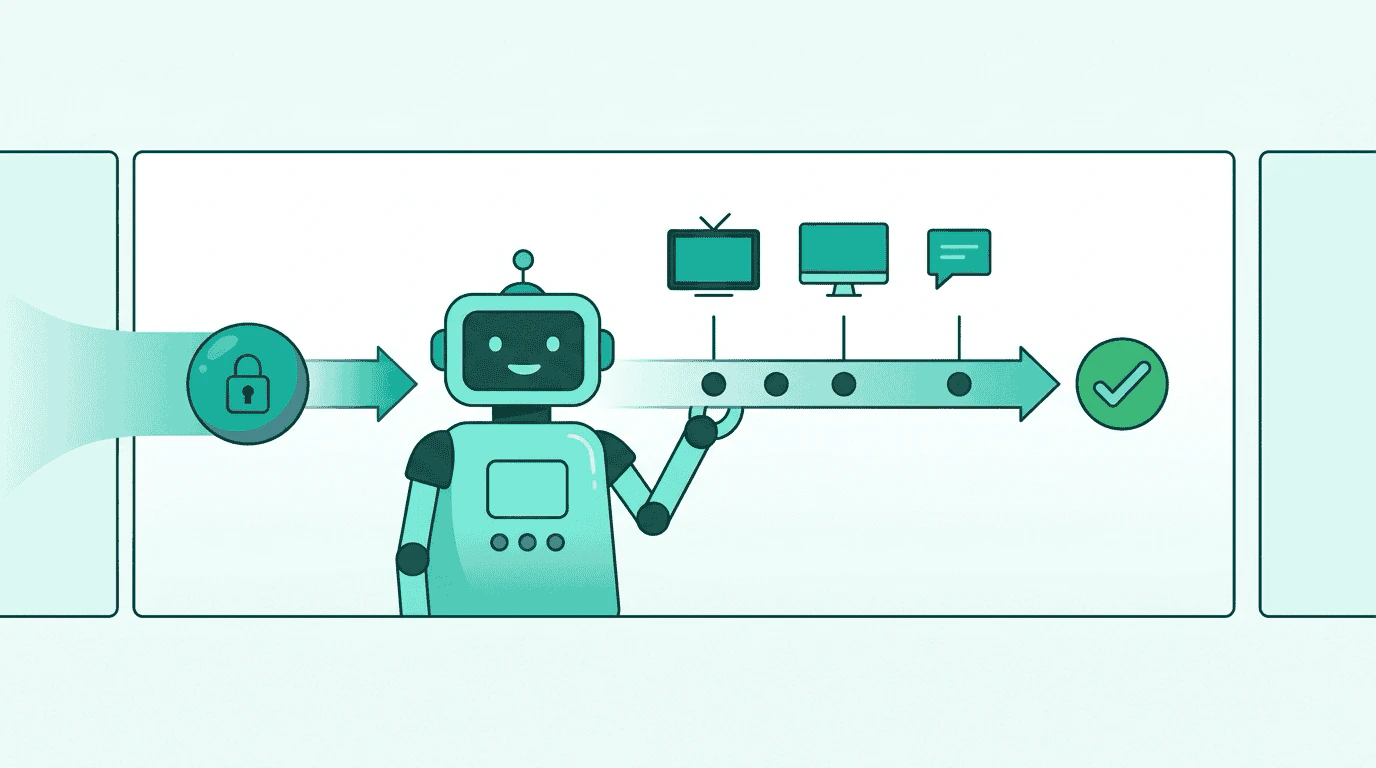

summary helps the platform judge relevance before deciding whether to incorporate the offer. The body gives the LLM factual product details it can weave into a natural response.Step 4: Frequency caps cross every surface

Step 5: The platform controls the experience

“For rocky terrain with good ankle support, you’ll want a shoe with a rock plate and higher collar. The Trail Pro 3000 is designed exactly for this — it has a full rock plate to protect against sharp rocks and an ankle-height collar for stability on technical terrain. The Vibram outsole with 4mm lugs gives you solid grip on loose surfaces. You might also look at…” Sponsored recommendation from Acme OutdoorThe LLM didn’t copy the creative manifest verbatim. It wove the product details into a natural recommendation that addressed the user’s specific question. The platform applied its own editorial policy — a sponsored content label, natural integration, and continuation with non-sponsored alternatives. Regulatory requirements around AI-generated sponsored content (FTC disclosure, EU AI Act) are the platform’s responsibility, enforced through their LLM integration, not the protocol.

Step 6: What this unlocks

| Without TMP | With TMP |

|---|---|

| Single ad network or no monetization | Any buyer agent can compete for relevance |

| Proprietary integration per advertiser | Standard protocol, open demand |

| No frequency caps across surfaces | Shared exposure store, same caps everywhere |

| Platform builds ad logic | Platform controls editorial integration only |

| Ad network controls the experience | Platform LLM controls presentation |

Multi-turn conversations

Each user message triggers a fresh Context Match evaluation. The platform decides when to re-evaluate — recommended triggers are topic shift (detected by the platform’s classifier), explicit product interest (“tell me more about…”), or session timeout (more than 5 minutes of inactivity). Platforms may cache the most recent Context Match response and skip re-evaluation for follow-up questions on the same topic. This reduces latency and provider load without affecting ad quality. When multiple buyers return offers for the same conversation turn, the platform ranks by relevance to the conversation — not by price. This is mediation, not an auction. An impression occurs when the LLM incorporates the creative manifest into its response. Follow-up questions about the recommended product (“where can I buy those?”) are engagement events, not new impressions. Latency. TMP’s sub-50ms round-trip is hidden within the LLM’s generation time (typically 1-3 seconds). The platform sends the Context Match request while preparing the LLM prompt, so TMP adds no perceptible delay to the user experience.Go deeper

TMP for AI assistants

Technical reference for AI assistant integration — request/response formats, context signals, activation patterns.

Context and identity

How the two-operation model works across all surfaces, with concrete examples.

TMP overview

Cross-publisher frequency capping walkthrough — how TMP solves the execution gap.

Specification

Authoritative message types, field tables, and conformance requirements.